Voters who no longer believe what politicians say must now discern whether even what they hear them say is real.

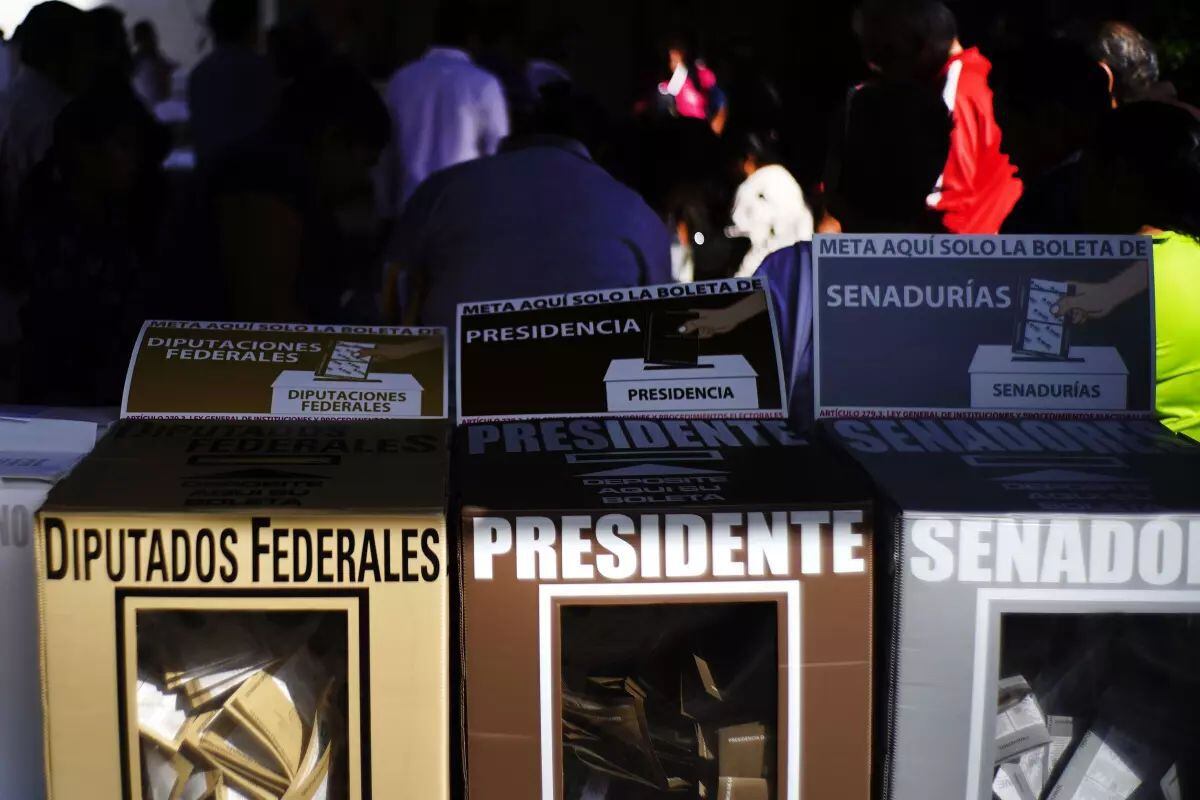

Audio clips generated with artificial intelligence, which simulate the voices of candidates or leaders, have arrived in Mexico and have already crept into politics, half a year before the presidential elections in which the ruling party of Andrés Manuel López Obrador, who cannot be re-elected, is fighting to stay in power.

The most recent example, which is related to internal struggles in the local ruling party in Mexico City, has raised uncertainty about what is real and what is not in political campaigns. It has also opened a debate over whether a fully reliable verdict on such material is even possible.

Artificial intelligence experts already warn of the threat: the human ear cannot distinguish original audio from recreated audio, and technology cannot guarantee 100% reliable verification.

The difficulty of verifying these recordings contrasts with how easily they can be created with artificial intelligence.

The circulation of deepfake audio, which imitates a public figure's tone and identity to the point of supplanting them, “puts the information ecosystem at risk, because people begin to make distorted decisions or begin to contaminate the public sphere,” Jesús González, an academic technician at the UNAM Legal Research Institute focused on artificial intelligence, told AP.

“It can generate spaces of distrust where we do not know well what is real and what is false,” he added.

Miguel Ángel Romero, political analyst and consultant, warns that these audios are shared in a context where leaks also proliferate in Mexico: authorities that disseminate private conversations and government campaigns that issue misleading information.

“The next electoral process will be one of deepfakes, and we will be listening to false conversations between candidates and opinion leaders,” Romero said. The analyst also warns that some recordings obtained through espionage are genuine, yet those involved deny them by blaming artificial intelligence. It may happen, he believes, that “the Mexican politician, in his cynicism, says that it is a deepfake, that it is false.”

The latest controversy erupted this week over an audio clip attributed to Samuel García, the Citizen Movement presidential candidate for the 2024 elections, in which a man with a northern accent, apparently drunk, is heard insulting and threatening a woman. Jorge Álvarez, García’s pre-campaign coordinator, said that this audio had already been denied in 2021.

In fact, it had already gone viral on social networks in 2018, when it was not linked to García, whom it is now intended to harm.

Technological advances and AI are not perceived as a threat per se. On the contrary, politicians around the world have incorporated artificial intelligence to define their communication strategies, but alerts have been raised regarding malicious use that seeks to misinform or harm someone.

Claudia Flores Saviaga, senior researcher at the Civic AI Lab at Northeastern University in Boston, United States, told AP that this is the first time that deepfakes have been detected in the Mexican elections. She attributed this to the large-scale adoption of these technologies.

“It is very dangerous when it comes to elections or critical events like these,” she commented. “It is something that has alarmed not only governments but all types of organizations, due to the implications of creating an environment in which you will not be able to distinguish what is true and what is not,” she added.

Although there are some clues to identify fake audio, such as the breathing and pauses that a person makes, the human ear and the technologies on their own are not enough to detect a cloned voice with complete certainty.

Manel Terrazas, founder of Loccus.ai, a company that offers a cloned voice detection service, acknowledged that to date no technology can determine with complete certainty whether an audio is real.

“In the end you have to draw a parallel with any security system. There is no system that is 100% secure,” he commented. “It will always be a probabilistic exercise. It will never be deterministic.”

“We are now at a time in which, for a person, being able to identify whether a piece of content, whether image, video or in this case audio, is real or not, is practically impossible,” he said.

And this is what happened with the most recent case in Mexico. A recording released seemed to demonstrate the interference of Martí Batres, current head of government of Mexico City, in the internal process to elect the next candidate of the ruling Morena party in the capital.

The voice called for support for Clara Brugada, the former mayor of Iztapalapa who was ultimately chosen as the candidate, over Omar García Harfuch, the former Secretary of Security.

Batres dismissed the alleged leak and said the audio had been generated by artificial intelligence. An analysis with the Loccus tool showed that the audio was most likely artificial.

However, weeks later, social media users continue to have doubts, and some even defend the authenticity of the audio.

In this context, the Morena deputy Manuel Torruco presented an initiative before the Legislature that proposes prison sentences for those who use this technology to modify videos, audio, people’s faces or voice recordings with the intention of passing them off as real.

And in the United States, the Federal Election Commission began a process to potentially regulate AI-generated deepfakes in political ads ahead of the 2024 election.

For its part, YouTube will require creators to specify whether they have used generative artificial intelligence to create realistic-looking videos. If they do not do so, they will face sanctions that include the removal of their content or suspension from the platform, which also leads to their exit from the revenue sharing program.

Source: Gestion

Ricardo is a renowned author and journalist, known for his exceptional writing on top-news stories. He currently works as a writer at the 247 News Agency, where he is known for his ability to deliver breaking news and insightful analysis on the most pressing issues of the day.